Privacy can be defined as the right of individuals, groups, and institutions to determine for themselves when, how, and to what extent information about them is communicated to others. The concept of privacy has three dimensions: (i) Personal privacy: protecting a person against excessive and undue interference, such as physical searches or information that offends their moral sense. (ii) Territorial privacy: the physical area surrounding a person, which may not be violated without their consent (safeguards include laws governing search warrants). (iii) Informational privacy: the control over the selective collection, compilation, and dissemination of information about a person.

Technologies are needed to: (i) protect user identities by providing anonymity, pseudonymity, unlinkability, and unobservability of users; (ii) protect the identities of data subjects by providing their anonymity and pseudonymity; and (iii) protect the confidentiality and integrity of personal data.

Anonymity ensures that a user can use a resource or service without revealing their identity. It may be sender anonymity, when the user is anonymous in the role of sender of a message, or receiver anonymity, when the user is anonymous in the role of receiver. Unobservability ensures that a user can use a resource or service without others being able to observe that the resource or service is being used. Unlinkability ensures that a user can make multiple uses of resources and services without others being able to link these uses together; in particular, sender and receiver can be unlinkable, so that an observer cannot determine that they are communicating with each other. Pseudonymity ensures that a user acting under a pseudonym can use a resource or service without revealing their identity.
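The difference between a pseudonym that lets an observer link a user's separate uses together and one that prevents such linking can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not a prescribed scheme: HMAC-SHA256 is our choice of keyed one-way function, and `SERVICE_KEY`, `stable_pseudonym`, `transaction_pseudonym`, and `confirm` are hypothetical names. A stable pseudonym hides the identity but makes every use linkable; adding a fresh random nonce per use gives a new pseudonym each time, while the key holder can still confirm who acted.

```python
import hashlib
import hmac
import secrets

# Hypothetical secret held only by the pseudonymization service (an assumption).
# Without a secret key, anyone could recompute a pseudonym from a guessed identity.
SERVICE_KEY = secrets.token_bytes(32)


def _tag(key: bytes, message: str) -> str:
    """Keyed one-way function (HMAC-SHA256), truncated to 64 bits for readability."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def stable_pseudonym(identity: str, key: bytes = SERVICE_KEY) -> str:
    """Same identity always yields the same pseudonym, so all uses are linkable."""
    return _tag(key, identity)


def transaction_pseudonym(identity: str, key: bytes = SERVICE_KEY) -> tuple[str, str]:
    """A fresh random nonce per use yields a fresh pseudonym, so uses are unlinkable.

    Returns (pseudonym, nonce). The nonce is retained so that the key holder
    can later confirm which identity acted under the pseudonym.
    """
    nonce = secrets.token_hex(16)
    return _tag(key, nonce + "|" + identity), nonce


def confirm(identity: str, pseudonym: str, nonce: str, key: bytes = SERVICE_KEY) -> bool:
    """Check whether a transaction pseudonym belongs to an identity (constant-time compare)."""
    return hmac.compare_digest(_tag(key, nonce + "|" + identity), pseudonym)


if __name__ == "__main__":
    user = "alice@example.org"
    print(stable_pseudonym(user) == stable_pseudonym(user))  # True: uses are linkable
    t1, n1 = transaction_pseudonym(user)
    t2, _ = transaction_pseudonym(user)
    print(t1 == t2)  # False (with overwhelming probability): uses are unlinkable
    print(confirm(user, t1, n1))  # True
```

Without `SERVICE_KEY`, an observer sees only unrelated random-looking strings in the per-use case, which is what distinguishes it from the stable case.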
Pseudonyms can be classified according to their level of protection, listed here in ascending order: (a) Personal pseudonyms, which are further subdivided, again by increasing protection, into public, non-public, and private personal pseudonyms. (b) Role pseudonyms, which are further subdivided into business pseudonyms and transaction pseudonyms. According to how they are generated, pseudonyms can be: self-generated pseudonyms, reference pseudonyms, cryptographic pseudonyms (with operational separation of duties: symmetric encryption is used and the key is split into two parts, K = K1 + K2, with K1 held by a security administrator and K2 by a data protection officer, so that neither can reverse a pseudonym alone), and one-way pseudonyms. Anonymization (or de-personalization) can be: (i) Perfect: the data is anonymized so that the data subject can no longer be identified. (ii) Practical: personal data is modified so that information about personal or material circumstances can no longer be attributed to an identified or identifiable individual, or can be attributed only with a disproportionate amount of time, expense, and effort.

Re-identification remains a risk. Data records collected for statistical purposes contain identity data (name, address, ID number, social security number, etc.), demographic data (sex, age, nationality, etc.), and analytical data (habits, illnesses, etc.). The degree of anonymity of statistical data depends on the size of the database and on the entropy of the demographic attributes, which an attacker can exploit as additional knowledge. That entropy depends on the number of attributes, the number of possible values of each attribute, the frequency distribution of those values, the dependencies between attributes, and so on.
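The link between attribute entropy and re-identification risk can be made concrete with a small Python sketch. The eight records and the function names below are invented for illustration. The sketch computes the Shannon entropy of the demographic values and the fraction of records that are the only member of their group, i.e., records that an attacker who already knows those demographic values could single out.

```python
import math
from collections import Counter

# Invented toy release: identity data (name, ID number) already removed;
# demographic attributes remain alongside one analytical attribute.
RECORDS = [
    {"sex": "F", "age": 34, "nationality": "ES", "illness": "asthma"},
    {"sex": "F", "age": 34, "nationality": "ES", "illness": "diabetes"},
    {"sex": "M", "age": 51, "nationality": "ES", "illness": "asthma"},
    {"sex": "M", "age": 51, "nationality": "FR", "illness": "none"},
    {"sex": "F", "age": 28, "nationality": "FR", "illness": "diabetes"},
    {"sex": "M", "age": 34, "nationality": "ES", "illness": "none"},
    {"sex": "F", "age": 51, "nationality": "PT", "illness": "asthma"},
    {"sex": "M", "age": 28, "nationality": "ES", "illness": "none"},
]


def entropy_bits(values) -> float:
    """Shannon entropy H = -sum(p * log2(p)) of the observed value frequencies."""
    counts = Counter(values)
    n = sum(counts.values())
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def anonymity_sets(records, attributes) -> Counter:
    """Size of each group of records sharing the same combination of attribute values."""
    return Counter(tuple(r[a] for a in attributes) for r in records)


def unique_fraction(records, attributes) -> float:
    """Fraction of records alone in their group, hence re-identifiable by linkage."""
    groups = anonymity_sets(records, attributes)
    return sum(1 for size in groups.values() if size == 1) / len(records)


if __name__ == "__main__":
    for attrs in (["sex"], ["sex", "age"], ["sex", "age", "nationality"]):
        joint = entropy_bits(tuple(r[a] for a in attrs) for r in RECORDS)
        share = unique_fraction(RECORDS, attrs)
        print(attrs, f"H = {joint:.2f} bits", f"unique = {share:.2f}")
```

On this toy table, the joint entropy rises from 1.00 to 2.50 and then 2.75 bits as attributes are combined, and the share of unique records rises from 0 to 0.50 and then 0.75. This mirrors the point above: more attributes, and more evenly spread values, leave an attacker with smaller anonymity sets.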
The components of a privacy policy are: site identification; scope; contact information; the types of information collected (including information collected through cookies); how the information is used; the conditions under which it may be shared; how to opt out; how to access one's information; data retention policies; and participation in privacy seal programs.

Covert Channels

A covert channel (also called a leakage path) is a channel that leaks information from a protected area (module or program) to an unprotected one; the typical setting is a system that handles sensitive information. The most important characteristic of a covert channel is its bandwidth, measured in bits per second (bps). Covert channels can use almost any medium to transfer information, for example steganography, digital watermarks (DWM), and fingerprinting. The two main types of covert channels are: (1) Storage channels. For example, a process A writes to an object and a process B reads from it. Possible storage channels include: (i) object attributes, such as file attributes (length, format, modification date, ACL (Access Control List), etc.); (ii) object existence, for example, checking whether a certain file exists; (iii) shared resources, for example, the state of the print queue (full or empty).

(2) Timing channels. For example, a process A produces some effect and a process B measures time. Possible timing channels include: (i) varying the CPU load, for example at 1-millisecond intervals (this works well when only two processes are running); (ii) making the program's execution time depend on the data it processes. Timing channels tend to be noisy and difficult to detect. One possible countermeasure is to deny access to the system clock, although the attacker may have an independent time reference.
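Both channel types can be illustrated with a small Python simulation. It is a toy under stated assumptions, not a model of any particular system. The first part is a storage channel based on object existence: the sender encodes each bit as the presence or absence of a file, and the receiver only tests for existence. The second part shows data-dependent execution: an early-exit comparison whose step count (used here as a stand-in for elapsed time, since real timing measurements are noisy) reveals how much of a guess is correct. The file name `flag.tmp` and all function names are hypothetical.

```python
import hmac
import os
import tempfile


# --- (1) Storage channel based on object existence -------------------------
def send_bit(flag_path: str, bit: int) -> None:
    """Process A: encode 1 as 'the file exists' and 0 as 'the file is absent'."""
    if bit:
        open(flag_path, "w").close()
    elif os.path.exists(flag_path):
        os.remove(flag_path)


def receive_bit(flag_path: str) -> int:
    """Process B: needs no access to any content, only an existence test."""
    return 1 if os.path.exists(flag_path) else 0


def storage_channel(bits: list[int]) -> list[int]:
    """Transmit one bit per time slot. Sender and receiver run in lockstep here;
    real processes would have to agree on the slot timing."""
    with tempfile.TemporaryDirectory() as shared_dir:
        flag = os.path.join(shared_dir, "flag.tmp")  # hypothetical agreed name
        received = []
        for bit in bits:
            send_bit(flag, bit)
            received.append(receive_bit(flag))
        return received


# --- (2) Timing channel from data-dependent execution ----------------------
def naive_equals(secret: str, guess: str) -> tuple[bool, int]:
    """Early-exit comparison returning (match, steps). The step count stands in
    for elapsed time and reveals how many leading characters are correct."""
    steps = 0
    for s, g in zip(secret, guess):
        steps += 1
        if s != g:
            return False, steps
    return len(secret) == len(guess), steps


def safer_equals(secret: str, guess: str) -> bool:
    """hmac.compare_digest avoids content-based short-circuiting (length may still leak)."""
    return hmac.compare_digest(secret.encode(), guess.encode())


if __name__ == "__main__":
    print(storage_channel([1, 0, 1, 1, 0]))  # [1, 0, 1, 1, 0]
    print(naive_equals("s3cret", "axxxxx"))  # (False, 1)
    print(naive_equals("s3cret", "s3xxxx"))  # (False, 3)
```

In the timing part, an attacker who can measure the longer running time for `"s3xxxx"` learns that the first two characters are right, and can recover the secret one character at a time.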

Information Concealment. Steganography.

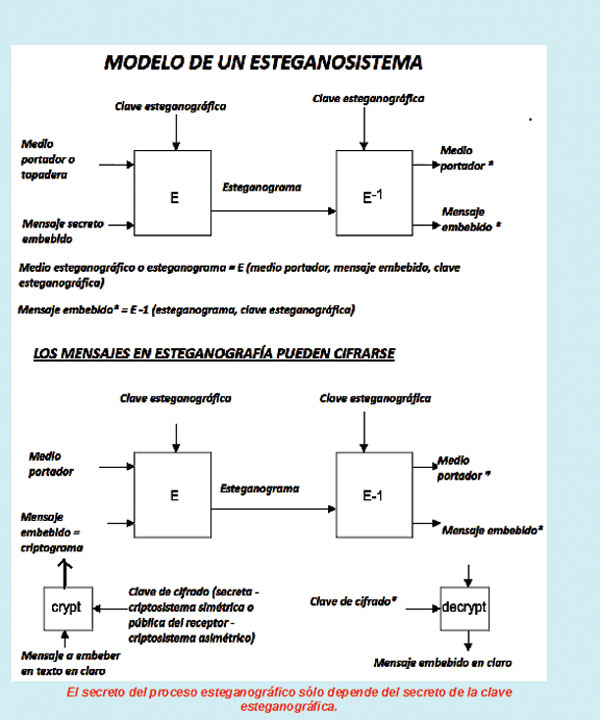

Information concealment is a general concept that includes steganography (covert communication) and digital watermarks (DWM). Steganography literally means "covered writing." Like cryptography, it protects a secret, but the difference is that what is hidden is the very existence of the message. This is achieved by embedding a secret message or document within an open carrier message, or cover. Steganography therefore allows secret messages to be transmitted using innocuous carriers in such a way that the presence of the embedded message cannot be detected. Examples of cover carriers include images, audio, video, and text data. Digital watermarking also embeds a message within a cover message, usually to deter intellectual property theft; typically, the cover is a digital image and the embedded message is a copyright notice.

Many steganographic techniques have been developed throughout history. For example, given a cover text, the steganographic key may be "take the second letter of each word," or letters may be selected pseudo-randomly according to a formula in modular arithmetic whose modulus is the number of characters in the text. Another technique hides the bits of a secret message in the LSB (Least Significant Bit) of the bytes that make up each pixel in a sparse region of a cover image (in 24-bit-per-pixel images, three secret bits are stored per pixel, one in each color byte). Embedding starts at a given pixel, which is one part of the steganographic key, and advances with jumps between pixels determined by a pseudo-random function whose seed is the other part of the key; this can be regarded as a method based on the Cardan grille. Another mechanism uses certain fields in the encapsulation headers of a PDU (Protocol Data Unit: a frame at L2, a packet at L3, a TCP segment or UDP datagram at L4, an email message at L5, etc.). Yet another technique encodes the secret message in the spacing between PDUs: a short interval represents a "1" bit and a longer interval represents a "0" bit.

Compression can affect a secret message hidden in an image, depending on the image format: (i) if the format is uncompressed or uses lossless compression (BMP, GIF), the hidden information remains intact; (ii) if the compression is lossy (JPEG), the hidden message may not survive intact. One possible solution is to replicate the message in several places and to use error-correcting codes so that it can still be recovered if it is partially modified.
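The keyed LSB technique can be sketched in Python on raw 24-bit RGB bytes, such as the uncompressed pixel data of a BMP. The sketch makes several assumptions. The key is the pair (`start`, `seed`). The jump size of 1 to 4 pixels is an arbitrary choice. Python's `random.Random` is not cryptographically secure, so a real scheme would use a keyed cryptographic generator. The receiver is assumed to know the message length. Selecting a suitable region of the image is omitted. The function names are hypothetical.

```python
import random


def _positions(n_pixels: int, start: int, seed: int, count: int) -> list[int]:
    """Pixel indices: begin at `start`, then advance by pseudo-random jumps of 1..4."""
    rng = random.Random(seed)  # NOT cryptographically secure; illustration only
    positions, p = [], start
    for _ in range(count):
        if p >= n_pixels:
            raise ValueError("cover image too small for this message and key")
        positions.append(p)
        p += rng.randint(1, 4)
    return positions


def _to_bits(data: bytes) -> list[int]:
    return [(byte >> (7 - i)) & 1 for byte in data for i in range(8)]


def _from_bits(bits: list[int]) -> bytes:
    out = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for b in bits[i:i + 8]:
            byte = (byte << 1) | b
        out.append(byte)
    return bytes(out)


def embed(cover: bytes, message: bytes, start: int, seed: int) -> bytes:
    """Hide message bits in the LSB of the R, G and B bytes of selected pixels."""
    stego = bytearray(cover)
    bits = _to_bits(message)
    pixels = _positions(len(cover) // 3, start, seed, (len(bits) + 2) // 3)
    for k, p in enumerate(pixels):
        for c in range(3):  # three secret bits per pixel, one per color byte
            i = 3 * k + c
            if i < len(bits):
                stego[3 * p + c] = (stego[3 * p + c] & 0xFE) | bits[i]
    return bytes(stego)


def extract(stego: bytes, length: int, start: int, seed: int) -> bytes:
    """Recover `length` bytes; the receiver must hold the same (start, seed) key."""
    nbits = 8 * length
    bits = []
    for p in _positions(len(stego) // 3, start, seed, (nbits + 2) // 3):
        for c in range(3):
            if len(bits) < nbits:
                bits.append(stego[3 * p + c] & 1)
    return _from_bits(bits)


if __name__ == "__main__":
    rng = random.Random(7)
    cover = bytes(rng.randrange(256) for _ in range(3 * 100))  # 100 RGB pixels of noise
    stego = embed(cover, b"Hi", start=10, seed=2024)
    print(extract(stego, 2, start=10, seed=2024))  # b'Hi'
    print(max(abs(a - b) for a, b in zip(cover, stego)))  # never more than 1
```

Each modified byte changes by at most one unit, which is why the change is visually imperceptible. It is also why lossy recompression, which rewrites exactly these low-order details, tends to destroy the hidden bits.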

Final Considerations

Our research group has been working for over twenty years on covert channels and on technologies for concealing not only the meaning of information but also the information itself, both in data transmission and in the storage of information and knowledge in databases and other information repositories.

This article is part of the activities carried out within the LEFIS-APTICE project (funded by Socrates, European Commission).

Author:

Prof. Dr. Javier Areitio Bertolín – E.Mail:

Professor at the Faculty of Engineering. ESIDE.

Director of the Networks and Systems Research Group. University of Deusto.