Dimensions of privacy. Characterization of privacy and security

The use of anonymity and pseudonyms is a key and expanding approach in various areas of computer science and telecommunications. Some dimensions of privacy are: (i) Persistence. The information generator should be able to control how long the information persists after its publication (execution/transmission/storage). (ii) Content leakage. The information generator should be able to control how much information is leaked by the published content. (iii) Eavesdropping. The information generator should be able to control which other parties can read the published (executed/transmitted/stored) information. (iv) Correlation capability. The information generator should be able to control the ability of eavesdroppers to correlate their data. (v) Replays. The information generator should be able to control whether eavesdroppers can replay the published information, whether the replay is private, and whether the ability to replay persists. (vi) Channel leakage. The information generator should be able to control how much partial information is leaked by the chosen communication channels.

Anonymity means that the agent performing the operation has no observable persistent characteristics. For example, turning on a radio is a way to receive information anonymously (although not perfectly, as a TEMPEST attack could be carried out). Reading the classifieds in a particular newspaper is another example of imperfect anonymity, since someone could track who buys that newspaper. In general, broadcast channels/media are often a good approach to achieving anonymity. Anonymity and the use of pseudonyms enable location privacy, where location describes the actual physical connection to the communication medium. Anonymity is more private than the use of pseudonyms because it is less susceptible to tracking. Having a pseudonym does not necessarily imply that the location is public: the pseudonym can be a reply block in a mix network (mixnet), or a key pair that a user uses to sign their documents.
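The difference in trackability between a persistent pseudonym and anonymity can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the function names are hypothetical, and the pseudonym is simply a hash of random bytes standing in for a public key, not a real signature scheme.

```python
import hashlib
import secrets
from collections import defaultdict


def new_pseudonym() -> str:
    """Create a persistent pseudonym (assumption: a hash of random bytes
    standing in for the fingerprint of a public key)."""
    stand_in_public_key = secrets.token_bytes(32)
    return hashlib.sha256(stand_in_public_key).hexdigest()[:16]


def publish_pseudonymous(log: list, pseudonym: str, message: str) -> None:
    """Publish a message tagged with the author's persistent pseudonym."""
    log.append((pseudonym, message))


def publish_anonymous(log: list, message: str) -> None:
    """Publish a message with no persistent identifier attached."""
    log.append((None, message))


def link_by_author(log: list) -> dict:
    """What an observer can do: group messages that share a pseudonym.
    Anonymous messages carry no tag and therefore cannot be grouped."""
    groups = defaultdict(list)
    for tag, message in log:
        if tag is not None:
            groups[tag].append(message)
    return dict(groups)


if __name__ == "__main__":
    log = []
    alice = new_pseudonym()
    publish_pseudonymous(log, alice, "first post")
    publish_pseudonymous(log, alice, "second post")
    publish_anonymous(log, "unsigned note")
    print(link_by_author(log))
```

The observer never learns who is behind the pseudonym, yet can still correlate everything published under it; the anonymous message offers nothing to correlate.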

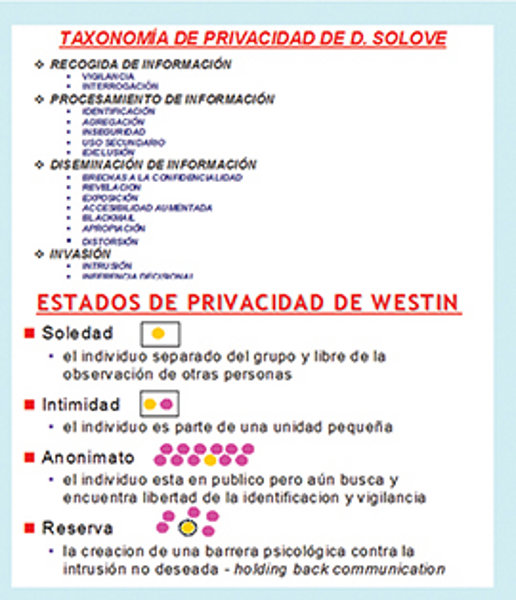

Information privacy is a property of security and a key element in its construction. At the individual level, it enables freedom from intrusion, profiling, and manipulation; it protects against crimes such as identity theft; it provides flexibility in accessing and using content and services; and it allows individuals to control their own information. Today, information security and privacy can be seen not as opposing processes but as complementary ones, forces that can be combined in the arduous task of protecting against an ever-increasing range of risks. Regarding privacy and security, it can be said that "unity is strength." The current state of science and technology makes it possible to address rigorously a symbiosis that seemed unthinkable years ago. Privacy and security may initially appear to be two sides of the same coin, both essential in our information and knowledge society, since they share many mechanisms such as cryptography and steganography, but privacy goes a step further. Information security protects data and resources both physically and technologically, ensuring confidentiality (limited access), integrity/authentication (data is authentic, unmodified, and complete), availability (resources are accessible to legitimate entities), non-repudiation (preventing denial of actions taken), authorization (access control/identity management), and auditability/accountability (logging, penetration testing/ethical hacking, reviewing application code using formal methods, monitoring activities), among other things.

Information privacy aims to protect data in relation to how it is defined and used. This increasingly involves technologies, policies that define and classify data and its use, and regulations and laws (LOPD-RMS, HIPAA, GLBA, FERPA, FCRA, COPPA, etc.). Information privacy is related to data protection and enables freedom of choice, personal control (allowing individuals to maintain spheres of solitude, privacy, control, autonomy, intimacy, etc.), and informational self-determination (privacy implies the right to control one's personal information and the ability to determine whether and how one's information is collected and used). It allows for control over the collection, use, and disclosure of any recorded information about a personally identifiable individual. Privacy is not the same as security; however, security is vital to privacy, and privacy encompasses a much broader set of protections than just security.

Information privacy is the right of individuals and organizations to deny or restrict the collection and use of information/knowledge about them. It is difficult to maintain nowadays because data is stored online. Our personal data is captured not only through our use of computers but also from an increasing number of other analog/digital capture points. Freedom would evaporate without privacy. Privacy is not just about keeping something hidden; it preserves spheres of reserve, autonomy, personal control, solitude, and so on. The three key aspects of privacy are freedom from intrusion (being left alone), control over information about oneself, and freedom from surveillance (being tracked, monitored, or watched). Some privacy problems arise when personal information appropriate for one context is shared inappropriately in another (contextual integrity); the user must be able to judge the context. It is now imperative to give users greater control over shared personal information, for which it is critical to know what information is being processed and with whom it is being shared. There is a growing number of technical privacy properties: anonymity/pseudonymity (the service provider can observe access to the service but cannot observe the user's identity), unlinkability (between sender and receiver), unobservability (presence is not visible), oblivious transfer (the service provider can identify the user but cannot observe details of service access such as which data records were accessed, which keywords were used, which data was downloaded, etc.), plausible deniability, location privacy, and censorship resistance. Tools used in support of these properties include pseudonyms, privacy wizards (for example, on social networks), cookie management, ad blocking, malware management, web/XML filtering, etc.

The main areas of concern regarding privacy are:

(1) The invisible collection of information, that is, the collection of personal information about a natural or legal person without their knowledge. Personally Identifiable Information (PII) is any information held by an entity that identifies or describes an individual. Sensitive PII covers items such as the national identity card, financial data, health/medical data, biometrics, driver's license, passport, credit card, real estate holdings, etc. Sensitive data includes passwords, economic forecasts, intellectual property information, financial reports, etc. The mass of information collected surreptitiously is growing daily and encompasses an increasing number of areas: surveillance (TV/CCTV cameras and webcams in parking lots, shops/supermarkets, banks, hospitals, streets, roads, and various buildings, as well as airports using X-rays and millimeter waves to see under people's clothing), tracking cookies while browsing the web, bank and credit cards, caller ID in telephony, and P2P communications. It also includes monitoring and traceability based on GPS (Global Positioning System)/Galileo/GLONASS, vehicle black boxes, Wi-Fi/WLAN/WCAN, RFID/IoT/WBAN, Bluetooth/Zigbee/WPAN, WiMAX/WMAN, 2G-2.5G-3G/GSM/GPRS/UMTS/HSDPA mobile phones, etc. All this information ends up in databases of individuals, consumers, etc., covering areas such as purchase records, web browsing activity, social media member lists, address change forms, and lists of debtors, mortgage holders, homeowners, people with traffic violations, etc. Smart energy meters allow real-time consumption data to be sent to the service provider, enabling it to infer private information about individuals, such as when they are home, their routines (sleeping, eating, watching TV, using the washing machine), and changes in routine such as the arrival of visitors.

(2) Secondary use, that is, the use of personal information for a purpose other than that for which it was provided. This includes: (i) Data mining. This involves searching and analyzing large datasets to find patterns and develop new information and/or knowledge. The inference problem in databases arises when unauthorized users can derive confidential information from the data that has been released. The goal of Privacy-Preserving Data Mining (PPDM) is to develop algorithms that modify the original data so that private data and knowledge remain private even after the data mining process. (ii) Computer matching. This involves combining and comparing information from different databases (for example, using national identity cards, photographs, or social security numbers to identify records). (iii) Computer profiling. This involves analyzing data from computer files to determine the characteristics of people most likely to engage in a certain behavior. In the business world, profiling allows companies to determine a consumer's propensity for a product or service. In law enforcement, it allows for the creation of profiles of potential criminals/terrorists. In healthcare, it helps identify the tendency to contract diseases due to genetics, environment, and other factors. The secondary use of consumer information is increasing daily and appears in a growing number of areas: data mining, telemarketing, web advertising, mass marketing, unsolicited email, etc.

Regarding the chicken-or-the-egg question, it can be argued that information privacy can be the foundation for building more sophisticated information security. The antagonistic view of privacy and security, which held that increased security leads to decreased privacy and vice versa, has now been superseded: we can conceive of security and privacy as mutually reinforcing and complementary processes. The need for a balance between privacy and security is evident, given that poorly designed surveillance can lead to abuse. Privacy enhances security by taking it a step further. For example, VPN (Virtual Private Network) technology is not truly private, and security protocols such as SSH and SSL/TLS do not by themselves provide privacy. Privacy helps by adding anonymity mechanisms that defend against attacks such as traffic analysis.
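The PPDM goal described under secondary use, modifying the original data so that individual values stay private while aggregate patterns remain minable, can be illustrated with randomized response. This is one classic perturbation technique chosen here for illustration; the text does not name a specific algorithm, and the function names and the `p_truth` parameter are assumptions.

```python
import random


def randomized_response(true_answer: bool, p_truth: float, rng: random.Random) -> bool:
    """Perturb one yes/no answer: with probability p_truth report the truth,
    otherwise report a fair coin flip. A single reported answer therefore
    reveals little about the individual."""
    if rng.random() < p_truth:
        return true_answer
    return rng.random() < 0.5


def estimate_true_rate(observed_yes_rate: float, p_truth: float) -> float:
    """Invert the perturbation at the aggregate level.
    observed = p_truth * true_rate + (1 - p_truth) * 0.5"""
    return (observed_yes_rate - (1.0 - p_truth) * 0.5) / p_truth


def simulate(true_rate: float, p_truth: float, n: int, seed: int = 0) -> float:
    """Generate n perturbed answers and return the estimated true rate."""
    rng = random.Random(seed)
    yes = 0
    for _ in range(n):
        truth = rng.random() < true_rate
        yes += randomized_response(truth, p_truth, rng)
    return estimate_true_rate(yes / n, p_truth)


if __name__ == "__main__":
    # The analyst recovers the population rate without trusting any single answer.
    print(round(simulate(true_rate=0.3, p_truth=0.5, n=20000), 3))
```

The trade-off is visible in `p_truth`: lower values protect each respondent better but require more records to obtain a stable estimate.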

Identifying paradigms in privacy. Privacy by design

Two extreme privacy paradigms are:

(1) Strict privacy. The subject provides the minimum amount of data possible and reduces the need to rely on other entities as much as possible. The subject is an active security user. The goal of data protection is data minimization, protection against surveillance, interrogation, aggregation, and identification; for this purpose, PET tools, for example, can be used. The adversary may be located within the communications provider or the entity that holds the data.

(2) Trust-based privacy. This is a less rigorous approach: the data subject provides their data, and a controlling entity is responsible for its protection. The data subject has lost control of their data, and in practice it is very difficult for them to verify how their data is collected and processed. The objective of data protection revolves around the principles of purpose, consent, and data security. Data controllers must be trusted to be honest and competent, and one can only hope for the best from them.

According to Daniel Solove, a society without privacy protection would be oppressive, unjust (for violating a right), and even irrational, reflecting a low level of development and transgressing a key social norm. According to Diffie and Landau, private communication is fundamental to national security and our democracy. A report by the AAAS (American Association for the Advancement of Science) indicates that anonymous online communication is a morally neutral technology. In the US, anonymous communication is considered a constitutional right (First Amendment), and therefore a solid human right. According to the European Union, a misguided understanding of security can erode the values of a democratic society, such as justice, freedom of choice and agency, equality, and the freedom to go where and when one wishes. To determine a balance between perfect privacy and the complete loss of privacy, two concepts emerge: (i) The notion of the individual: what individuals should be allowed to do to achieve their full potential. (ii) The notion of society: for a high degree of individuality, we need a liberal and pluralistic society.

Privacy by design concerns determining what data is actually necessary to provide a service, under the principle of data minimization, since there is no need to collect data indiscriminately. Several principles of privacy by design have been defined: (i) It should be proactive, not reactive, and preventative, not remedial. (ii) It should be embedded in the design. (iii) It should exist by default. (iv) There must be visibility and transparency. (v) Respect for user privacy must always be present. (vi) There must be end-to-end lifecycle protection. (vii) It must preserve full functionality, so that the outcome is positive-sum, not zero-sum. Privacy and security by design is an outstanding goal in our society that must be promoted at all levels (cultural awareness, through laws, using technology and management, etc.) since it would greatly reduce existing, difficult-to-solve problems.
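As a minimal sketch of the data minimization principle, the following Python fragment keeps only the fields a declared purpose needs and discards the rest before storage. The purposes, field names, and the `REQUIRED_FIELDS` mapping are hypothetical, chosen only for illustration.

```python
# Hypothetical mapping from each declared purpose to the fields it needs.
REQUIRED_FIELDS = {
    "newsletter": {"email"},
    "shipping": {"name", "postal_address"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Return only the fields needed for the given purpose.
    An unknown purpose yields an empty record, so the default
    is to collect nothing (privacy by default)."""
    allowed = REQUIRED_FIELDS.get(purpose, set())
    return {key: value for key, value in record.items() if key in allowed}


if __name__ == "__main__":
    submitted = {
        "name": "A. User",
        "email": "user@example.org",
        "postal_address": "1 Example Street",
        "date_of_birth": "1990-01-01",
    }
    print(minimize(submitted, "newsletter"))
```

Placing the filter at the point of collection, rather than deleting fields later, reflects the proactive and embedded-in-design principles listed above.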

Privacy Policies.

Three possible user-facing privacy policies are: (i) Opt-in (default: no consent). This allows the use of personal information within an organization but requires the user's explicit consent before personal information can be disclosed to third parties outside the organization. The 1995 EU Data Protection Directive emphasizes the opt-in model. (ii) Opt-out (default: consent). This policy gives the user the option to prohibit the sharing of their non-public personal information with third parties. An organization that intends to share a user's non-public information with third parties must give the user an opportunity to refuse permission. If the user does not restrict the use of their personal information, the organization can share it with third parties. It has been found that fewer than 8% of users typically unsubscribe from mailing lists. The 1999 GLBA uses the opt-out philosophy. (iii) Blanket opt-in. This is related to anonymity.
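The practical difference between the opt-in and opt-out models is what happens when the user has expressed no choice. A minimal Python sketch, with hypothetical names, makes the two defaults explicit; blanket opt-in is left out because the text does not define its rules.

```python
from enum import Enum
from typing import Optional


class Policy(Enum):
    OPT_IN = "opt-in"    # default: no consent
    OPT_OUT = "opt-out"  # default: consent


def may_share_with_third_parties(policy: Policy, user_choice: Optional[bool]) -> bool:
    """Decide whether personal information may be disclosed to third parties.
    user_choice is True (user allowed sharing), False (user refused),
    or None (user never expressed a choice)."""
    if user_choice is not None:
        return user_choice             # an explicit choice always wins
    return policy is Policy.OPT_OUT    # silence means consent only under opt-out


if __name__ == "__main__":
    print(may_share_with_third_parties(Policy.OPT_IN, None))   # False
    print(may_share_with_third_parties(Policy.OPT_OUT, None))  # True
```

Since most users never change the default, the choice of policy largely determines the outcome.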

A proper privacy policy should answer questions such as: What type of information is being collected (email addresses, postal addresses, server/cookie information, etc.)? If there is more than one method of data collection, what other methods will be used? How, specifically, will the collected information be used? With whom will this information be shared? Will the collected information be stored locally or hosted remotely, and what level of security will be used to protect it? What is the specific policy if someone wishes to unsubscribe? The policy should clearly specify what will be done if a user does not wish to receive any future communications, and what will be done if a user does not want their personal information included in a database.

Final Considerations

Our research group has been working for over fifteen years on the evaluation, synthesis, analysis, and risk assessment of information privacy. Methodologies, mechanisms, and services have been developed to promote privacy as a key factor in improving security.

This article is part of the activities carried out within the LEFIS-APTICE project (funded by Socrates, European Commission).

Bibliography

- Areitio, J. “Information Security: Networks, Computing and Information Systems”. Cengage Learning-Paraninfo. 2010.

- Areitio, J. “Analysis of Spam”. Conectrónica Magazine. No. 104. February 2007.

- Gutwirth, S., Poullet, Y., De Hert, P., Terwangne, C. and Nouwt, S. “Reinventing Data Protection”. Springer. 2009.

- Flegel, U. “Privacy Respecting Intrusion Detection”. Springer. 2007.

- Solove, D. J. “Understanding Privacy”. Harvard University Press. 2010.

- Wacks, R. “Privacy: A Very Short Introduction”. Oxford University Press. 2010.

- Nissenbaum, H. “Privacy in Context: Technology, Policy and the Integrity of Social Life”. Stanford Law Books. 2009.